Perceptual glimpses are locally accumulated and globally maintained at distinct processing levels

Curation statements for this article:-

Curated by eLife

eLife Assessment

This study presents valuable findings on the physiological and computational underpinnings of the accumulation of intermittent glimpses of sensory evidence. The evidence supporting the claims of the authors is solid, although a more exhaustive characterisation of how the different signals interact would have strengthened the study. The work will be of interest to cognitive and systems neuroscientists working on decision-making.


Abstract

Making decisions often requires the integration of multiple pieces of information. An extensive body of research has investigated the neural architecture underpinning evidence accumulation in perceptual tasks where information is continuously present, but less is known about how this neural architecture operates in situations affording only intermittent glimpses of an evidence source. In two electroencephalography (EEG) experiments, participants judged the direction of up to two pulses of motion evidence separated by gaps of varying duration. Our behavioural analysis found that participants used both pulses but underutilised the second, and showed no systematic decrease in accuracy as a function of gap duration. At the neural level, motor beta lateralisation tracked cumulative evidence across pulses, maintaining a sustained representation of the decision variable through the gap and until response. In contrast, the centroparietal positivity (CPP), a previously-characterised signature of evidence accumulation, built up transiently to a peak that scaled with each pulse’s contribution to the decision variable (i.e. the absolute belief update it produced), falling back to baseline in between pulses. These patterns were recapitulated in a model where pulse-information transiently integrated at the CPP level is fed to and maintained at a bounded motor level, so that information presented later on in a trial is integrated only if a bound has not yet been reached.

Reviewer #1 (Public review):

Summary:

This paper characterises the physiological and computational underpinnings of the accumulation of intermittent glimpses of sensory evidence, with a focus on the centroparietal positivity and motor beta lateralization. The main finding is that the centroparietal positivity builds up during evidence accumulation but falls back to baseline during gaps, while motor beta lateralization maintains a sustained representation throughout the gap and until response.

Strengths:

- Elegant combination of electroencephalography and computational modelling.

- Innovative task design, including parametric manipulation of gap duration.

- The authors describe results of two separate experiments, with very similar results, in effect providing an internal replication.

Weaknesses:

- A direct characterization of how the centroparietal positivity and motor beta lateralization interact is missing, which limits the novelty. In their reply to reviewers, the authors argue that the signal-to-noise ratio of EEG signals is insufficient for such analyses at the single-trial level. If so, a binned or trial-averaged approach could still be attempted.

- An exhaustive characterisation of sensors and frequency bands is also missing. In their reply to reviewers, the authors suggest that this would detract from their hypothesis-driven focus. I disagree: the main hypothesis and figures could remain centred on the centroparietal positivity and motor beta lateralization, with a more comprehensive mapping of sensors and frequencies placed in supplementary material. Since the purpose of the paper is to examine EEG-based decision signals in a novel behavioural context, a broader characterisation of the underlying EEG landscape would seem appropriate.

Reviewer #2 (Public review):

Summary:

This manuscript examines decision-making in a context where the information for the decision is not continuous, but separated by a short temporal gap. The authors use a standard motion direction discrimination task over two discrete dot motion pulses (but unlike previous experiments, fill the gaps in evidence with 0-coherence random dot motion of differently coloured dots). Previous studies using this task (Kiani et al., 2013; Tohidi-Moghaddam et al., 2019; Azizi et al., 2021; 2023) or other discrete sample stimuli (Cheadle et al., 2014; Wyart et al., 2015; Golmohamadian et al., 2025) have shown decision-makers to integrate evidence from multiple samples (although with some flexible weighting on each sample). In this experiment, decision-makers tended not to use the second motion pulse for their decision. This allows the separation of neural signatures of momentary decision-evidence samples from the accumulated decision-evidence. In this context, classic electroencephalography signatures of accumulated decision-evidence (central-parietal positivity) are shown to reflect the momentary decision-evidence samples.

Strengths:

The authors present an excellent analysis of the data in support of their findings. In terms of proportion correct, participants show poorer performance than predicted if assuming both evidence samples were integrated perfectly. A regression analysis suggested a weaker weight on the second pulse, and in line with this, the authors show an effect of the order of pulse strength that is reversed compared to previous studies: A stronger second pulse resulted in worse performance than a stronger first pulse (this is in line with the visual condition reported in Golmohamadian et al., 2025). The authors also show smaller changes in electrophysiological signatures of decision-making (central parietal positivity, and lateralised motor beta power) in response to the second pulse. The authors describe these findings with a computational model which allows for early decision-commitment, meaning the second pulse is ignored on the majority of trials. The model-predicted electrophysiological components describe the data well. In particular, this analysis of model-predicted electrophysiology is impressive in providing simple and clear predictions for understanding the data.

Weaknesses:

Some readers may be left questioning why behaviour in this experiment is so different from previous experiments which use almost exactly the same design (Kiani et al., 2013; Tohidi-Moghaddam et al., 2019; Azizi et al., 2021; 2023). Overall performance in this experiment was much worse than in previous experiments: participants achieved ~85% correct following 400 ms of 33-45% coherent motion. In previous work, performance was ~90% correct following 240 ms of 12.8% coherent motion. A second weakness is that, while the authors present a model which describes the data based on premature decision-commitment, they do not examine the explanation from the existing literature that evidence is flexibly weighted, and do not provide any analyses which could be used to compare these descriptions. While their model can describe the data in this manuscript, it cannot explain the data from previous experiments showing a stronger weight on the second pulse.

Author response:

The following is the authors’ response to the original reviews.

Public Reviews:

Reviewer #1 (Public review):

Summary:

This paper aims to characterise the physiological and computational underpinnings of the accumulation of intermittent glimpses of sensory evidence.

Strengths:

(1) Elegant combination of electroencephalography and computational modelling.

(2) The authors describe results of two separate experiments, with very similar results, in effect providing an internal replication.

(3) Innovative task design, including different gap durations.

Weaknesses:

(1) The authors introduce the CPP as tracking an intermediary (motor-independent) evidence integration process, and the MBL as motor preparation that maintains a sustained representation of the decision variable. It would help if the authors could more directly and quantitatively assess whether their current data are in line with this. That is, do these signals exhibit key features of evidence accumulation (slope proportional to evidence strength, terminating at a common amplitude that reflects the bound)? Additionally, plotting these signals report-locked (to the button press) would help here. What do the results mean for the narrative of this paper?

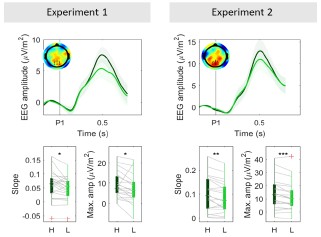

The reviewer is correct that properties such as temporal slope scaling with evidence strength and stereotyped threshold-like amplitude were key in establishing that the CPP reflects evidence accumulation in conventional continuous-stimulus tasks, and its motor independence was demonstrated in how it exhibited the same evidence-dependent dynamics in the absence of motor requirements (e.g. O'Connell et al 2012). We agree that it is of interest to check any such properties that can be feasibly tested in the current, distinct task context of intermittent evidence with delayed responses. Given the way in which participants performed our delayed-response task, sometimes terminating decisions early, it is in the CPP-P1 that conventional patterns of coherence-dependence in slope and amplitude would be expected. Indeed, we found that the CPP-P1 reached higher amplitudes (Fig. 3A, Author response image 1) and exhibited a steeper build up in high- compared to low-coherence trials (Author response image 1). The slope and amplitude profile of the CPP-P2 is complex due to the variability in baseline activity across our various delay conditions and the bounded process that participants engaged in, but it is still consistent with an accumulation process. Our simulations provide a full account of how an accumulating signal could produce the observed results.

Author response image 1.

Grand-averaged (± sem) CPP-P1 traces in both experiments (top). The boxplots (bottom) show the average build-up slope, computed for each subject individually as the slope between 0.2 s post P1 onset (when the CPP begins its buildup) and the time at which peak amplitude was reached within the 0.4-0.6 s interval. Red crosses indicate outliers, defined as values more than 1.5 times the interquartile range beyond the bottom or top of the box. Grey lines indicate single-subject estimates, and asterisks reflect the significance of paired t-tests for the estimated slope and amplitude effects; **p<0.01, *p<0.05. H = high coherence, L = low coherence.
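The slope measure and outlier rule described in this caption can be illustrated with a minimal sketch. It assumes a single-subject average trace sampled on a regular time axis. The function names `buildup_slope` and `tukey_outliers`, the synthetic triangular trace, and the use of NumPy's default percentile interpolation for the quartiles are illustrative assumptions, not the authors' analysis code.

```python
import numpy as np

def buildup_slope(times, trace, onset=0.2, peak_window=(0.4, 0.6)):
    """Slope from `onset` to the peak found inside `peak_window`.

    Returns (slope, peak amplitude, peak time). Times are in seconds
    relative to pulse onset; the amplitude unit is whatever `trace` uses.
    """
    times = np.asarray(times, dtype=float)
    trace = np.asarray(trace, dtype=float)
    in_window = (times >= peak_window[0]) & (times <= peak_window[1])
    # Index (in the full trace) of the maximum inside the peak window.
    peak_idx = np.flatnonzero(in_window)[np.argmax(trace[in_window])]
    # Sample closest to the assumed build-up onset.
    onset_idx = np.argmin(np.abs(times - onset))
    slope = (trace[peak_idx] - trace[onset_idx]) / (times[peak_idx] - times[onset_idx])
    return slope, trace[peak_idx], times[peak_idx]

def tukey_outliers(values):
    """Flag values more than 1.5 x IQR below Q1 or above Q3."""
    values = np.asarray(values, dtype=float)
    q1, q3 = np.percentile(values, [25, 75])
    iqr = q3 - q1
    return (values < q1 - 1.5 * iqr) | (values > q3 + 1.5 * iqr)

# Synthetic trace (hypothetical): flat until 0.2 s, linear rise to 0.5 s, then decay.
times = np.linspace(0.0, 1.0, 101)
trace = np.where(times < 0.2, 0.0,
                 np.where(times <= 0.5, 10.0 * (times - 0.2), 3.0 - 10.0 * (times - 0.5)))
slope, peak_amp, peak_time = buildup_slope(times, trace)
outlier_mask = tukey_outliers([1.0, 2.0, 3.0, 4.0, 100.0])
```

In practice the function would be applied once per subject and condition, and the resulting per-subject slopes compared between high- and low-coherence trials with a paired test.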

Like in other delayed-response tasks (Twomey et al 2016; McCone et al 2026), we observe here that the CPP peaks and falls well before the response is cued or indeed executed (here, in fact peaking and falling for each individual pulse). Thus, its pre-response dynamics will not relate to stimulus-driven evidence accumulation in the way they do in immediate response contexts (e.g. O’Connell et al. 2012; Steinemann et al. 2018). We therefore do not analyse response-aligned CPPs in the experiment.

As to the intermediary role we have interpreted for the CPP, in addition to the local pulse driven peak-and-fall dynamics compared to the sustained profiles of motor preparation signals, we can point to the obvious temporal delay between the signals, where evidence-dependent buildup in the CPP substantially precedes that of motor preparation, as observed in all previous studies comparing the two (e.g. Kelly & O'Connell 2013).

(2) The novelty of this work lies partly in the aim to characterize how the CPP and MBL interact (page 5, line 3-5). However, this analysis seems to be missing. E.g., at the single-trial level, do relatively strong CPP pulses predict faster/larger MBL? The simulations in Figure 5 are interesting, but more could be done with the measured physiology.

As exemplified in the extant EEG-decision literature, the low signal-to-noise ratio of EEG is such that attempts are seldom made to link two EEG signals on a single-trial basis, and studies instead favour testing single-trial relationships between each individual EEG signal and behaviour, or, most commonly, comparing patterns of variation in the EEG signals across experimental conditions (e.g. difficulty). Accordingly, here we show that trials with high coherence P1 evoked 1) higher CPP amplitudes (Fig. 3A,C), and 2) stronger MBL (Fig. S2 & S3). Further, we showed that particularly high CPP amplitudes following the first pulse led to stronger weights on choice for the first pulse (Fig. S11), which could only be mediated by the motor system.

(3) The focus on CPP and MBL is hypothesis-driven but also narrow. Since we know only a little about the physiology during this "gaps" task, have the authors considered computing TFRs from different sensor groupings (perhaps in a supplementary figure?).

While we agree that it might be interesting to explore frequency bands and sensors more broadly, we feel that such an exploration would detract from the hypothesis-driven focus on how prominent, well-characterised decision signals in the brain behave in a context where evidence is presented in an atypical, seldom-studied manner, namely in the form of temporally separate pulses. Our aim was not to explore whole-brain dynamics that might be engaged during the task, but rather to get a better understanding of the functional roles of the neural processes underlying the CPP and MBL during decision making. Providing a detailed description of whole-scalp responses is thus beyond the scope of this paper, but given that all data will be made publicly available this can be pursued in future work and by other researchers.

(4) The idea of a potential bound crossing during P1 is elegant, albeit a little simplistic. I wonder if the authors could more directly show a physiological signature of this. For example, by focusing on the MBL or occipital alpha split by the LL, LH, HL and HH conditions, and showing this pulse- as well as report-locked. Related, a primacy effect can also be achieved by modelling (i) self-excitation of the current one-dimensional accumulator, or (ii) two competing accumulators that produce winner-take-all dynamics. Is it possible to distinguish between these models, either with formal model comparison or with diagnostic physiological signatures?

In addition to the CPP amplitude effects we report in the main paper, the reviewer is correct that pulse-locked MBL can also provide a physiological signature of the greater number of pulse-1 bound crossings when that pulse is high-coherence. This is shown in Figure S3, where we see this coherence-dependent effect consistently across all gap durations and both experiments. Figure S2 also shows that the MBL step-change after P2 is greater in P1-low coherence trials in Experiment 1, as predicted by the bound-crossing account, and consistent with the CPP findings. We note that this effect appears absent in Experiment 2, but this is likely because the greater proportion of shorter gap durations (0, 0.12, 0.36 s) means that updates following P2 are likely to still capture P1-driven changes, due to signal-transmission delays. Please also note that Fig. S2 and S3 have been updated from the previous version, because while revising the paper we noticed a mistake whereby we were plotting alpha band power (8-13 Hz) rather than the intended beta (13-30 Hz). The results remain qualitatively unchanged. Although there isn’t sufficient single-trial signal-to-noise ratio to be able to categorise individual trials as having crossed a threshold or not, this is strong evidence in support of the coherence-dependent amplitudes of the CPP and motor updates. Analyzing beta locked to the report would not be informative in this case because of the delayed reporting structure of the task and the threshold-crossing relationship beta exhibits with response execution (O’Connell et al. 2012). That is, beta will reach the same amplitude immediately prior to the response regardless of whether or not decisions were terminated during P1. Instead, we believe that the empirical CPP-P2 traces we show provide direct evidence that the second pulse was not fully integrated in all trials, and as our modelling confirms, this is consistent with bound crossings occurring sometimes before P2.
First, the fact that CPP-P2 amplitudes were overall lower than CPP-P1 amplitudes mirrors the behavioural observation that the first pulse had a stronger weight on choice than the second one. Second, we show that trials where the CPP was particularly high after the first pulse were also trials where P1 exerted a particularly strong influence on choice (see Fig. S11), further validating the idea that higher CPP amplitudes are directly related to behaviour.

Regarding self-excitation (SE) and winner-take-all competition (WTAC), these could indeed contribute to the behavioural primacy effects, but they would not detract from our central finding that the CPP does not encode a sustained representation of a decision variable, but rather reflects two rounds of evidence accumulation feeding into a single decision process. Further, it is not immediately clear whether/how these alternative models might also account for the CPP-P1/CPP-P2 results as simply as our bounded model does. While it might be theoretically possible for SE/WTAC models to explain 1) why the CPP-P2 is generally lower than the CPP-P1 across conditions, and 2) why the maximum CPP-P2 amplitudes in P1-high trials are smaller than in P1-low trials, these patterns of results are not an immediate consequence of standard implementations. Further, while the question of whether the accumulation process is perfect integration or involves SE or WTAC is certainly of additional interest, given that this is a delayed response task and does not provide information on termination timing through RT distributions, arbitrating between these modes of integration would not be straightforward with the current data.
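The bound-crossing account discussed in this response can be made concrete with a deliberately simplified simulation. This is a toy sketch, not the authors' fitted model: each pulse is reduced to a single Gaussian evidence sample, the bound is checked only once (after P1), and the drift values `MU_HIGH` and `MU_LOW`, the bound of 1.0, and the function name `simulate_accuracy` are all hypothetical choices made for illustration.

```python
import numpy as np

MU_HIGH, MU_LOW = 1.5, 0.5  # hypothetical mean evidence for high/low coherence pulses

def simulate_accuracy(mu1, mu2, bound, n_trials=10000, sigma=1.0, seed=0):
    """Two-pulse accumulator in which P2 is integrated only if P1 did not hit the bound.

    Returns (proportion correct, proportion of trials committed after P1).
    The correct direction is coded as positive.
    """
    rng = np.random.default_rng(seed)
    e1 = rng.normal(mu1, sigma, n_trials)  # evidence delivered by pulse 1
    e2 = rng.normal(mu2, sigma, n_trials)  # evidence delivered by pulse 2
    committed = np.abs(e1) >= bound        # bound reached during P1
    dv = np.where(committed, e1, e1 + e2)  # P2 is ignored on committed trials
    return float(np.mean(dv > 0)), float(np.mean(committed))

# Bounded accumulator: pulse order matters.
acc_hl, commit_hl = simulate_accuracy(MU_HIGH, MU_LOW, bound=1.0)
acc_lh, commit_lh = simulate_accuracy(MU_LOW, MU_HIGH, bound=1.0)

# Unbounded (perfect) accumulator: pulse order is irrelevant.
acc_hl_perfect, _ = simulate_accuracy(MU_HIGH, MU_LOW, bound=np.inf)
acc_lh_perfect, _ = simulate_accuracy(MU_LOW, MU_HIGH, bound=np.inf)
```

Under these assumed parameters, a high-coherence first pulse triggers more early commitments than a low-coherence one, so the strong-then-weak order yields higher accuracy than weak-then-strong. Removing the bound removes that order effect. Self-excitation or winner-take-all dynamics would need their own simulations and are not covered by this sketch.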

(5) The way the authors specify the random effects of the structure of their mixed linear models should be specified in more detail. Now, they write: "Where possible, we included all main effects of interest as random effects to control for interindividual variability." This sounds as if they started with a model with a full random effect structure and dropped random components when the model would not converge. This might not be sufficiently principled, as random components could be dropped in many different orders and would affect the results. Do all main results hold when using classical random effects statistics on subject-wise regression coefficients?

The equations in the paper include the full details of the random effects structure we used for each model. We note that only two of our four equations did not include a full random effect structure, indeed due to convergence issues. We have now fit these models with a maximal random effects structure (i.e. including all fixed effects as random effects as well) with the “bobyqa” optimiser. This resulted in singular fits for both Eq. 2 (Exp. 1 and Exp. 2) and Eq. 3 (Exp. 2 only). Following previous suggestions, we used a weakly informative Wishart prior (Chung et al. 2015) to regularise the random effects covariance matrix using the blme package (Chung et al. 2013), which resolved the singular fit problem. However, some models still produced convergence warnings. To assess these models’ robustness, we compared the fixed effect parameter estimates across multiple optimisers, as suggested by the lme4 developers (see lme4 documentation). Parameter estimates across optimisers rarely deviated by more than one decimal point across 6 optimisers (see Bates et al. 2011), and we thus concluded the model estimates were robust and convergence warnings were a false positive, a known issue in lme4. For all models in the paper, we report the parameters estimated using the “bobyqa” optimiser. All main inferential results remain unchanged (except for one interaction that was not of interest in Exp. 1), and the estimated slopes and statistical results for all models have been updated in the manuscript. We also included all these details in the manuscript.
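For readers unfamiliar with the alternative the reviewer raises, "classical random effects statistics on subject-wise regression coefficients" usually refers to a two-stage summary-statistics approach: fit the regression separately for each subject, then test the per-subject coefficients against zero at the group level. The sketch below illustrates that idea only. It uses ordinary least squares on simulated data, whereas the models in the paper may use other link functions, and the helper names `subjectwise_betas` and `one_sample_t` and all simulated values are hypothetical.

```python
import numpy as np

def subjectwise_betas(X_by_subject, y_by_subject):
    """Stage 1: ordinary least squares per subject (an intercept is added)."""
    betas = []
    for X, y in zip(X_by_subject, y_by_subject):
        design = np.column_stack([np.ones(len(y)), X])
        coef, *_ = np.linalg.lstsq(design, y, rcond=None)
        betas.append(coef)
    return np.vstack(betas)  # shape: (n_subjects, n_coefficients)

def one_sample_t(betas):
    """Stage 2: one-sample t statistic per coefficient across subjects."""
    n_subjects = betas.shape[0]
    sem = betas.std(axis=0, ddof=1) / np.sqrt(n_subjects)
    return betas.mean(axis=0) / sem  # compare against t with n_subjects - 1 df

# Simulated example: 20 subjects, 200 trials each, true mean slope of 2.0.
rng = np.random.default_rng(1)
X_by_subject, y_by_subject = [], []
for _ in range(20):
    subject_slope = 2.0 + 0.3 * rng.standard_normal()  # between-subject variability
    x = rng.standard_normal(200)
    y = 0.5 + subject_slope * x + rng.standard_normal(200)
    X_by_subject.append(x)
    y_by_subject.append(y)

betas = subjectwise_betas(X_by_subject, y_by_subject)
t_values = one_sample_t(betas)
```

This approach sidesteps the choice of random effects structure entirely, at the cost of ignoring differences in the precision of each subject's estimate. That trade-off is one reason it can serve as a robustness check alongside a mixed model.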

Reviewer #2 (Public review):

Summary:

This manuscript examines decision-making in a context where the information for the decision is not continuous, but separated by a short temporal gap. The authors use a standard motion direction discrimination task over two discrete dot motion pulses (but unlike previous experiments, fill the gaps in evidence with 0-coherence random dot motion of differently coloured dots). Previous studies using this task (Kiani et al., 2013; Tohidi-Moghaddam et al., 2019; Azizi et al., 2021; 2023) or other discrete sample stimuli (Cheadle et al., 2014; Wyart et al., 2015; Golmohamadian et al., 2025) have shown decision-makers to integrate evidence from multiple samples (although with some flexible weighting on each sample). In this experiment, decision-makers tended not to use the second motion pulse for their decision. This allows the separation of neural signatures of momentary decision-evidence samples from the accumulated decision-evidence. In this context, classic electroencephalography signatures of accumulated decision-evidence (central-parietal positivity) are shown to reflect the momentary decision-evidence samples.

Strengths:

The authors present an excellent analysis of the data in support of their findings. In terms of proportion correct, participants show poorer performance than predicted if assuming both evidence samples were integrated perfectly. A regression analysis suggested a weaker weight on the second pulse, and in line with this, the authors show an effect of the order of pulse strength that is reversed compared to previous studies: A stronger second pulse resulted in worse performance than a stronger first pulse (this is in line with the visual condition reported in Golmohamadian et al., 2025). The authors also show smaller changes in electrophysiological signatures of decision-making (central parietal positivity and lateralised motor beta power) in response to the second pulse. The authors describe these findings with a computational model which allows for early decision-commitment, meaning the second pulse is ignored on the majority of trials. The model-predicted electrophysiological components describe the data well. In particular, this analysis of model-predicted electrophysiology is impressive in providing simple and clear predictions for understanding the data.

Weaknesses:

Some readers may be left questioning why behaviour in this experiment is so different from previous experiments, which use almost exactly the same design (Kiani et al., 2013; Tohidi-Moghaddam et al., 2019; Azizi et al., 2021; 2023). The authors suggest this may be due to the staircase procedure used to calibrate the coherence of (single-pulse) dot motion stimuli for individuals at the start of the experiment. But it remains unclear why overall performance in this experiment is so bad. Participants achieved ~85% correct following 400 ms of 33-45% coherent motion. In previous work, performance was ~90% correct following 240 ms of 12.8% coherent motion. It seems odd that adding the 0% coherent motion in the temporal gaps would impair performance so greatly, given it was clearly colour-coded. There is a lack of detail about the stimulus presentation parameters to understand whether visual processing explains the declined performance, or if there is a more cognitive/motivational explanation.

We thank the reviewer for highlighting this. We apologise for not providing full details about the visual display, which we have included now.

The moving dots were presented centrally on the monitor, within a 5 degree aperture, and moved at a speed of 5 degrees/second. The monitor refresh rate was 60 Hz for 19 participants and 85 Hz for 3 participants in Experiment 1, while it was 85 Hz for 19 participants and 60 Hz for 2 participants in Experiment 2. Dot density in our task was similar to previous studies (16.7 dots/degree²/s, as in Kiani & Shadlen 2013; Tohidi-Moghaddam et al. 2019; Azizi et al. 2021, 2023). However, in contrast to previous studies, we did not include any feedback on a trial-by-trial basis, instead only providing feedback at the end of each block indicating the average accuracy. This would have made it harder for participants to continually assess how well they were performing and to adjust their strategies (e.g. increase their bound for better accuracy) accordingly. We agree that the inclusion of 0% coherence dots during the gap between pulses is unlikely to have caused the participants’ relatively low overall performance, especially since we did not find accuracy to be overall lower for longer 0%-coherence gaps.

Further, as the reviewer notes, we used a staircasing procedure at the beginning of the experiment which used only single pulses of evidence. This may have encouraged participants to set a bound that can usually be reached by one pulse, and the resultant early terminations meant that they seldom used the full 400ms of evidence that were available to them. In fact, we would like to thank the reviewer for pointing out Golmohamadian et al., 2025, which used a similar variable delays task structure but with different visual stimuli. They, like us, trained on a single-pulse task version and omitted trial-by-trial feedback in the main task, and, also like us, reported a stronger choice reliance on pulse-1. This suggests that these two factors may suffice to induce a primacy rather than a recency effect.

There are other reasons why performance may have been different in our task compared to previous studies. For example, our task included a lead-in period that was longer than in previous studies and contained 0%-coherence dots, in order to minimise interfering VEP components (the lead-in period was between 700 and 1050 ms in our study, compared to 200-500 ms in Kiani & Shadlen 2013; Tohidi-Moghaddam et al. 2019 & Azizi et al. 2023, and 400-1000 ms in Azizi & Ebrahimpour 2021). This longer and visually explicit preparation period may have acted as a warning cue, allowing participants to fully prepare before the first pulse, and again making it easier for them to hit a bound with only that information.

We have added a more detailed discussion about how our stimuli and the task characteristics may have resulted in a substantially different performance in our task compared to previous studies in the discussion section.

Recommendations for the authors:

Reviewing Editor:

Please consider the following reviewer suggestions for how to strengthen the evidence for your central claims, which could translate into an improved assessment of the "strength of evidence".

Apart from these useful suggestions, I had some concerns about scholarship, because the list of studies currently cited in your introduction is exclusively from your group, while one of the phenomena of interest - motor beta power lateralization (MBL) in decision-making - has been widely studied by several groups, using also other techniques.

I was wondering why you chose not to cite the ample MEG evidence for the role of MBL in decision-making. This has been shown both in classical random dot motion tasks (Donner et al, Curr Biol, 2009; de Lange et al, J Neurosci, 2013; Pape et al, Nat Commun, 2016; Urai et al, Nat Commun, 2022) as well as in tasks involving discrete evidence samples (Wilming et al, Nat Commun, 2020; Murphy et al, Nat Neurosci, 2021). Another relevant EEG study is by Ian Gould et al, J Neurosci, 2010. There is also quite a bit of monkey LFP work (mainly by Saskia Haegens) on choice-selective beta power in the motor system of the macaque, although the link to the lateralized beta power suppression in your work and the above human E/MEG studies remains a bit elusive. I feel it would be important to provide a more balanced reflection of the existing literature on this phenomenon.

We thank the editor for this fair comment, and we apologise for having provided an overly narrow, EEG-centric view of the literature, arising from our interest in the CPP component, which hasn’t yet been characterised in MEG or LFPs. We have now substantially expanded the introduction to provide a more balanced and comprehensive overview of the literature.

Reviewer #1 (Recommendations for the authors):

(1) The diffusion model needs to be explained in more detail. For example, it should be explicitly stated that the model was fit to only choices, as most readers would expect reaction times. Further, it needs to be stated whether the model was fit separately for each subject or in one go to the group-level data. If the former, it is important to add error bars of the between-subjects variability (in simulated and empirical data) to Figure 4A. If the latter, it would be important to determine uncertainty using bootstrapping.

The original model was fit to grand-average data, as stated in the methods section. To assess between-subjects variability, we have re-fitted the model to each individual subject, for each experiment. The average of the individually-estimated model parameters closely recapitulated the values obtained from the fit to grand-averaged data (Fig. S12). We then simulated N = 10000 trials for each individual, and we report the grand-averaged results with error bars indicating the standard error of the mean as a supplementary figure (Fig. S13). The results replicate the ones reported in the main manuscript. We have also made it explicit that the models are fit to accuracy data but not RT.
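As a rough illustration of the between-subjects error bars mentioned above, the standard error of the mean can be computed from one summary value per participant. This is only a sketch: the function name and the example accuracies are hypothetical and are not taken from the study.

```python
import math
import statistics


def mean_and_sem(per_subject_values):
    """Return the across-subject mean and standard error of the mean (SEM).

    Each entry is assumed to be one participant's summary value,
    e.g. simulated accuracy in one condition (hypothetical data).
    """
    n = len(per_subject_values)
    mean = statistics.fmean(per_subject_values)
    # Sample standard deviation (n - 1 denominator) divided by sqrt(n).
    sem = statistics.stdev(per_subject_values) / math.sqrt(n)
    return mean, sem


# Hypothetical per-subject accuracies for a single condition.
example_mean, example_sem = mean_and_sem([0.70, 0.80, 0.90])
```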

(2) The authors write numerous times that the MBL exhibits an "evidence-dependent" buildup. However, should this not be "choice-dependent"? In Figure 2A, one can clearly see that the sign of MBL follows choice and not objective evidence.

We thank the reviewer for this comment. By evidence-dependent, we mean that lateralisation towards the correct response is strongest in high-coherence trials (see Fig. S2, S3). This is indeed because the sign of MBL is choice-dependent, and participants are less likely to make mistakes in high-coherence trials. We have added a clarification sentence in the text.

(3) It would aid readability to add sub-conclusions at the end of each Results section.

We have added clarifications where needed.

(4) In Figure 1B, I cannot see a dashed line for the HL condition. I understand that it must lie under the LH condition, but it would be good to show it separately.

We thank the reviewer for this comment. Since we cannot show both lines separately without additional panels, given the HL and LH lines perfectly overlap, we indicate at the end of the caption that this is the case as follows: “Note that a perfect accumulator predicts identical accuracies for the HL and LH conditions, and therefore the two lines overlap.”

(5) In Figure 4B, is the horizontal dashed line important? It is confusing because the legend incorrectly states that this is "data".

Thanks for this observation - it was only there to indicate the 50% level as a benchmark to assess how frequent early terminations are, but we agree that it was unnecessary and potentially confusing, so we have removed it from the plot.

Reviewer #2 (Recommendations for the authors):

(1) The authors should more directly address how behaviour in their task differs quite substantially from previous experiments with very similar designs (including why such high coherence levels are required, over a longer duration, to reach overall worse performance). Some readers may also be interested in a broader discussion of how decision-makers may use flexible weights when integrating evidence across samples over time. While the explanation of bounded accumulation is convincing in this context, Tsetsos et al., (2012) suggest recency effects (as in Cheadle et al., 2014; Wyart et al., 2015) cannot be explained by bounded accumulation, but rather integration leak. Other factors may include stimulus consistency (Glickman et al., 2022) or even choice consistency across decisions (Bronfman et al., 2015). Golmohamadian et al., 2025 demonstrated flexibility in decision strategies across sensory modalities.

As we described above, we have added some more detailed explanation about why it might be the case that behaviour in our study differs from previous reports using similar tasks. We agree that the reversed pulse-reliance in our study compared to others presents an opportunity to discuss flexibility in decision strategy and so we have now added a broader discussion on different patterns of integration in various task contexts. We thank the reviewer for pointing out Golmohamadian et al., 2025, as they, like us, trained on a single-pulse task version and omitted trial-by-trial feedback in the main task, and, like us, reported a stronger choice reliance on pulse-1.

(2) Another open question is how central parietal positivity reflects an accumulation signal in the case of continuous evidence, but reflects momentary evidence in the case of discrete evidence samples. If, in both cases, the parietal evidence is passed along to motor processes for bounded decision commitment, how do motor processes deal with the changes in what is represented? Can the relationship between MBL and CPP in the model-simulated data shed some light on this? Specifically, how is the 0-gap condition treated in this simulation (which shows only 1 CPP peak but with a longer time to decay) compared to non-zero gap conditions (which show 2 peaks)?

This is a very interesting and important point, and we thank the reviewer for raising it. We believe that the CPP in our intermittent-dots task reflects dot-motion evidence integration in the same way as in conventional continuous evidence tasks, building at an evidence dependent rate (see Author response image 1), with the only difference being that integration processes can be turned “on” or “off” depending on whether evidence is present, and can thus be temporally split into multiple “rounds” of accumulation when there is a gap.

Our model simulations assume that evidence integration is triggered by the dots turning yellow, indicating the presence of evidence, and feeds continuously to the motor system in these periods. However, it is switched off either when 1) a bound has been hit, or 2) the dots turn blue again, at which point the CPP falls (see various rates of signal decay in Fig. S7). The reason the CPP continues longer before it peaks and falls in the zero-gap condition, by this account, is that there is no dot-colour change at the end of pulse-1 to switch it off, and thus the accumulation process continues until either a bound is hit, or the yellow dots turn blue after pulse-2. When there is a non-zero gap, despite the CPP being switched off, the decision variable itself remains encoded at the motor level so that no information is lost. This requires that the same instruction that turns off the CPP must also break or pause the flow from the CPP to the motor level and allow it to hold its current level until either a second pulse resumes a feed from a newly-triggered CPP, or response execution is cued. Thus, in our account, the accumulation process underlying the CPP in our intermittent-evidence task is identical to that in conventional continuous-evidence tasks but, since it can be turned “on” and “off” as a function of whether evidence is clearly present or absent, it produces two “rounds” of integration in non-zero gap conditions. The motor process also receives a feed from the CPP as in conventional continuous-evidence tasks, but with this feed similarly gated by the presence of evidence.

A slightly different and perhaps more challenging question (which the reviewer was perhaps alluding to) relates to tasks where evidence comes not in short noisy snippets, but rather as static tokens (e.g. Wyart et al. 2012, 2015; Murphy et al. 2021; Parés-Pujolràs et al. 2025). In these instances, the CPP exhibits transient evoked responses to each token, which scale with the belief updates resulting from it (Parés-Pujolràs et al. 2025). However, it remains unclear whether these transient potentials reflect a temporally-evolving integration process to compute the appropriate belief update afforded by that token in the context of a particular task, or rather reflect the output of such a process. The former account would be similar to our interpretation of the transient deflections observed in this gaps task, which we believe capture the same temporal integration processes as those commonly observed in conventional continuous noisy stimuli paradigms, only short-lived. The latter account would instead be specific to low-noise stimuli like tokens, where the computations required for belief updating may not require a temporally-extended integration process, but rely on different mechanisms to compute belief updates (e.g. prior-based modulations of sensory encoding, attention or neural gain). These questions remain open for future investigation.

(3) From what I understand, the model suggests all-or-none integration of the second pulse: either the bound has not been reached and the pulse is perfectly integrated, or the bound has been reached and so the pulse is not integrated. The CPP amplitude at pulse 2 is therefore determined not only by the strength of the evidence at pulse 2 but also by the proportion of trials where the evidence is not ignored: CPP at pulse 2 is of lower amplitude because it is calculated as an average across trials where it is either similar to CPP at pulse 1 or otherwise completely absent. Another explanation for the lower average amplitude is that all trials have a smaller amplitude (somewhat different from the main conclusions of the paper). It would be nice to show the dichotomy predicted by the model in the empirical data. I'm thinking of something similar to this 'bifurcation' analysis from Sergent et al., 2021. Or more simply, estimates of CPP amplitude from single trials (perhaps an average over a short window around the peak) should be more variable at pulse 2, with some reaching similar amplitudes to pulse 1, and many close to baseline, whereas at pulse 1, there should be a more uniform cluster of amplitudes. If all CPP peak amplitudes were lower, would this motivate a model comparison where, for example, additional evidence from the second pulse was down-weighted according to certainty following the first pulse (leading to all trials down-weighting the second pulse)? This could link in nicely with some of the more nuanced analyses related to attention in the supplementary figures.

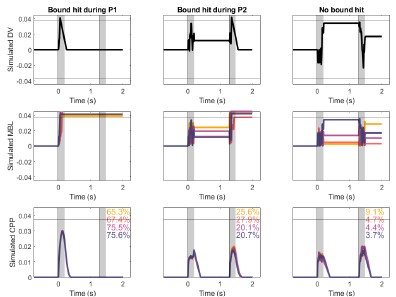

We thank the reviewer for this insightful comment, which will help us clarify how our model works. The integration of the second pulse does not work in an all-or-none manner. In our model, the accumulation stops whenever a bound is reached at the downstream motor level. The bound can be crossed 1) at some point during the 1st pulse (no integration of pulse 2 at all), 2) during the 2nd pulse (partial integration of pulse 2, until the bound is hit), or 3) not at all (full integration of pulse 2). Our model thus allows for partial integration of the second pulse rather than all-or-none. Author response image 2 shows 3 example trials that illustrate how the model works. The CPP amplitudes at pulse 2 are thus determined by two main factors: 1) whether or not accumulation of P2 is precluded by an earlier bound crossing in P1 (if it is, the CPP amplitude is assumed to equal 0), and 2) whether and when accumulation ended if it did take place. Our interpretation is that, given that trials where pulse 1 was low coherence 1) were less likely to terminate early (Fig. 4B) and 2) had achieved lower levels of accumulated evidence (Fig. 4C), the LL and LH conditions are linked to a higher proportion of trials where accumulation at pulse 2 does occur, and it lasts for a longer amount of time because the distance required to reach a bound is longer than in their pulse 1 high-coherence counterparts. We have clarified this point in the results section describing the model.
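The three trial types described above can be illustrated with a toy bounded accumulator. This is a minimal sketch under stated assumptions, not the fitted model: the function name, the discrete time steps, and all parameter values (drifts, bound, noise, steps per pulse) are hypothetical, and the gap is represented simply by leaving the decision variable untouched between pulses.

```python
import random


def simulate_trial(drift_p1, drift_p2, bound=1.0, noise_sd=0.1,
                   steps_per_pulse=20, rng=None):
    """Simulate one two-pulse trial of a gated, bounded accumulator.

    Returns (outcome, decision_variable, p2_steps_integrated).
    All parameter values are illustrative assumptions.
    """
    rng = rng or random.Random()
    dv = 0.0

    # Pulse 1: evidence is present, so it is accumulated until a bound is hit.
    for _ in range(steps_per_pulse):
        dv += drift_p1 + rng.gauss(0.0, noise_sd)
        if abs(dv) >= bound:
            # Bound hit during pulse 1: pulse 2 is not integrated at all.
            return "bound_in_p1", dv, 0

    # Gap: no evidence is fed forward; the decision variable is held as-is.

    # Pulse 2: accumulation resumes from the held level.
    for step in range(1, steps_per_pulse + 1):
        dv += drift_p2 + rng.gauss(0.0, noise_sd)
        if abs(dv) >= bound:
            # Bound hit during pulse 2: only part of pulse 2 was integrated.
            return "bound_in_p2", dv, step

    # No bound hit: pulse 2 was integrated in full.
    return "no_bound", dv, steps_per_pulse
```

In this sketch, a strong first pulse leaves less distance to the bound, so pulse 2 is either skipped or integrated for fewer steps, which is the qualitative pattern the response attributes to trials with a high-coherence pulse 1.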

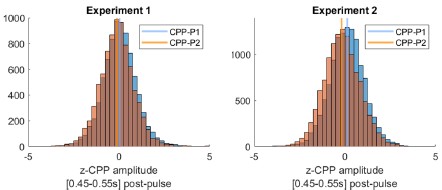

The reviewer notes: “Another explanation for the lower average amplitude is that all trials have a smaller amplitude (somewhat different from the main conclusions of the paper)”. However, our interpretation in fact predicts that the vast majority of trials should indeed exhibit smaller amplitudes. That can again be explained by the three trial types mentioned above. Unlike in CPP-P1, there would be a majority of trials where integration does not occur at all. Only trials where evidence was at least partially integrated during P2 would be predicted to have CPP-P2 amplitudes that are overall positive, and even in those instances, average amplitudes would be overall lower than CPP-P1 in trials that terminated early, because of the lower distance remaining to be covered before hitting a bound. Author response image 2 illustrates this point. Thus, the prediction regarding how CPP amplitude variance or distribution shape would compare between P1 and P2 is less straightforward than if it were all-or-none on P2, not to mention the fact that EEG noise would likely drown out distributional features like this. We therefore focus on a comparison of the means, for which our model has the clear prediction that most trials should exhibit lower CPP-P2 amplitudes. To assess whether empirical observations meet this prediction, and following the reviewer’s suggestion, we extracted the mean amplitudes around 0.45-0.55 s after P1 and P2, for each single trial. CPP-P2 data were baselined using the amplitude 100 ms before P2 onset, as in Fig. S5 - note that this is likely to introduce spurious drifts due to overlapping potentials from P1, but given that grand-averaged traces still qualitatively captured the key effects we assume it is a valid approach. We then pooled CPP-P1 and CPP-P2 amplitudes across pulses, and z-scored them for each participant separately. In both experiments, in a majority of participants (Exp. 1: 16/22, Exp. 2: 17/21) the median z-CPP-P1 amplitude was higher than that of z-CPP-P2. Author response image 3 illustrates the pooled distributions.
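The pooling, within-participant z-scoring and median comparison described above could be sketched as follows. This is an illustration only: the function names and toy amplitudes are hypothetical, the inputs are assumed to be already baselined single-trial window means, and the use of the sample standard deviation is an assumption since the response does not specify it.

```python
import statistics


def zscore_pooled(p1_amps, p2_amps):
    """Z-score one participant's P1 and P2 amplitudes against their pooled
    mean and standard deviation (sample SD is an assumption here)."""
    pooled = list(p1_amps) + list(p2_amps)
    mu = statistics.fmean(pooled)
    sd = statistics.stdev(pooled)
    z_p1 = [(a - mu) / sd for a in p1_amps]
    z_p2 = [(a - mu) / sd for a in p2_amps]
    return z_p1, z_p2


def p1_median_higher(p1_amps, p2_amps):
    """True if the participant's median z-scored P1 amplitude exceeds P2's."""
    z_p1, z_p2 = zscore_pooled(p1_amps, p2_amps)
    return statistics.median(z_p1) > statistics.median(z_p2)


def count_p1_higher(participants):
    """Count participants whose P1 median exceeds their P2 median.

    `participants` is a list of (p1_amps, p2_amps) pairs (hypothetical data).
    """
    return sum(p1_median_higher(p1, p2) for p1, p2 in participants)
```

Because z-scoring is a monotonic transform within a participant, it does not change which median is higher for that participant; its role is to put amplitudes on a common scale before distributions are pooled across participants.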

Author response image 2.

Decision variable simulations illustrating sample single trials (top) and CPP traces averaging data across conditions and N = 1000 trials (bottom), using model fits from Exp 2, in the long gap condition. Overlaid text indicates the percentage of trials in each subset, for each condition. The horizontal line indicates the bound; shaded areas indicate pulse presentation times. A. The bound was hit during P1, and therefore no further accumulation occurred during P2. B. The bound was hit during P2, and therefore P2 was only partially accumulated. C. No bound was hit, and therefore all evidence from P2 was accumulated.

Author response image 3.

Pooled CPP-P1 and CPP-P2 amplitude distributions [450-550 ms post-pulse], normalised within-participant, and baselined 100 ms before pulse onset. In both experiments, CPP-P2 amplitudes had a lower median (vertical line) normalised amplitude than CPP-P1.

(4) A minor note: Full details of stimulus presentation (size, number of dots, dot size, speed, lifetime) would be appreciated.

Thank you - we have now provided these details in the methods section (see also reply to public reviews above).

(5) Are the authors sure they want to use this 'Gaps task' name? It seems a bit strange to introduce this name in this context, where there isn't really a 'Gap' (random dot motion fills the gap). A reader could get the impression the name was given in the Kiani et al., 2013 study (page 3, paragraph 1: "This scenario has begun to be studied using an intermittent-evidence or "gaps" task (Kiani et al., 2013) ...") but this is not true, Kiani et al. never use the term "Gaps task", nor has any other study since (as far as I know).

We thank the reviewer for noting this oversight on our part - we have now made it clear that “gaps task” is the way we refer to the task originally developed by Kiani et al. 2013 in the introduction. We have decided to still use this name because it is a convenient proxy, in the understanding that “gap” refers to a “gap” in coherent motion as in Kiani et al (2013), albeit not a proper blank as in the original implementation.

-

eLife Assessment

This study resolves a cryo-EM structure of the GPCR, human GPR30, which responds to bicarbonate and regulates cellular responses to pH and ion homeostasis. Understanding the ligand and the mechanism of activation is important to the field of receptor signaling and potentially facilitates drug development targeting this receptor. Structures and functional assays provide solid evidence for a potential bicarbonate binding site.

-

Reviewer #1 (Public review):

[Editors' note: this version has been assessed by the Reviewing Editor without further input from the original reviewers.]

Summary:

This study resolves a cryo-EM structure of the GPCR, GPR30, in the presence of bicarbonate, which the author's lab recently identified as the physiological ligand. Understanding the ligand and the mechanism of activation is of fundamental importance to the field of receptor signaling. This solid study provides important insight into the overall structure and suggests a possible bicarbonate binding site.

Strengths:

The overall structure, and proposed mechanism of G-protein coupling are solid. Based on the structure, the authors identify a binding pocket that might accommodate bicarbonate. Although assignment of the binding pocket is speculative, extensive mutagenesis of residues in this pocket identifies several that are important to G-protein signaling. The structure shows some conformational differences with a previous structure of this protein determined in the absence of bicarbonate (PMC11217264). To my knowledge, bicarbonate is the only physiological ligand that has been identified for GPR30, making this study an important contribution to the field. However, the current study provides novel and important circumstantial evidence for the bicarbonate binding site based on mutagenesis and functional assays.

Weaknesses:

Bicarbonate is a challenging ligand for structural and biochemical studies, and because of experimental limitations, this study does not elucidate the exact binding site. Higher resolution structures would be required for structural identification of bicarbonate. The functional assay monitors activation of GPR30, and thus reports on not only bicarbonate binding, but also the integrity of the allosteric network that transduces the binding signal across the membrane. However, biochemical binding assays are challenging because the binding constant is weak, in the mM range.

The authors appropriately acknowledge the limitations of these experimental approaches, and they build a solid circumstantial case for the bicarbonate binding pocket based on extensive mutagenesis and functional analysis. However, the study does fall short of establishing the bicarbonate binding site.

-

Reviewer #2 (Public review):

Summary:

In this manuscript, "Cryo-EM structure of the bicarbonate receptor GPR30," the authors aimed to enrich our understanding of the role of GPR30 in pH homeostasis by combining structural analysis with a receptor function assay. This work is a natural development and extension of their previous work on Nature Communications (PMID: 38413581). In the current body of work, they solved the cryo-EM structure of the human GPR30-G-protein (mini-Gsqi) complex in the presence of bicarbonate ions at 3.15 Å resolution. From the atomic model built based on this map, they observed the overall canonical architecture of class A GPCR and also identified 3 extracellular pockets created by ECLs (Pockets A-C). Based on the polarity, location, size, and charge of each pocket, the authors hypothesized that pocket A is a good candidate for the bicarbonate binding site. To identify the bicarbonate binding site, the authors performed an exhaustive mutant analysis of the hydrophilic residues in Pocket A and analyzed receptor reactivity via calcium assay. In addition, the human GPR30-G-protein complex model also enabled the authors to elucidate the G-protein coupling mechanism of this special class A GPCR, which plays a crucial role in pH homeostasis.

Strengths:

As a continuation of their recent Nature Communications publication, the authors used cryo-EM coupled with mutagenesis and functional studies to elucidate bicarbonate-GPR30 interaction. This work provided atomic-resolution structural observations for the receptor in complex with G-protein, allowing us to explore its mechanism of action, and will further facilitate drug development targeting GPR30. There were 3 extracellular pockets created by ECLs (Pockets A-C). The authors were able to filter out 2 of them and hypothesized that pocket A was a good candidate for the bicarbonate binding site based on the polarity, location, and charge of each pocket. From there, the authors identified the key residues on GPR30 for its interaction with the substrate, bicarbonate. Together with their previous work, they mapped out amino acids that are critical for receptor reactivity.

Weaknesses:

When we see a reduction of a GPCR-mediated downstream signaling, several factors could potentially contribute to this observation: 1) a reduced total expression of this receptor due to the mutation (transcription and translation issue); 2) a reduced surface expression of this receptor due to the mutation (trafficking issue); and 3) a dysfunctional receptor that doesn't signal due to the mutation.

Altogether, the wide range of surface expression across the different cell lines, combined with the different receptor function readouts, makes the cell functional data only partially support their structural observations.

-

Reviewer #3 (Public review):

Summary

GPR30 responds to bicarbonate and plays a role in regulating cellular pH and ion homeostasis. However, the molecular basis of bicarbonate recognition by GPR30 remains unresolved. This study reports the cryo-EM structure of GPR30 bound to a chimeric mini-Gq in the presence of bicarbonate, revealing mechanistic insights into its G-protein coupling. Nonetheless, the study does not identify the bicarbonate-binding site within GPR30.

Strengths

The work provides strong structural evidence clarifying how GPR30 engages and couples with Gq.

Weaknesses

Several GPR30 mutants exhibited diminished responses to bicarbonate, but their expression levels were also reduced. As a result, the mechanism by which GPR30 recognizes bicarbonate remains uncertain.

-

Author Response:

The following is the authors’ response to the previous reviews

Reviewer #1 (Public review):

Summary:

This study resolves a cryo-EM structure of the GPCR, GPR30, in the presence of bicarbonate, which the author's lab recently identified as the physiological ligand. Understanding the ligand and the mechanism of activation is of fundamental importance to the field of receptor signaling. This solid study provides important insight into the overall structure and suggests a possible bicarbonate binding site.

Strengths:

The overall structure, and proposed mechanism of G-protein coupling are solid. Based on the structure, the authors identify a binding pocket that might accommodate bicarbonate. Although assignment of the binding pocket is speculative, extensive mutagenesis of residues in this pocket identifies several that are important to G-protein signaling. The structure shows some conformational differences with a previous structure of this protein determined in the absence of bicarbonate (PMC11217264). To my knowledge, bicarbonate is the only physiological ligand that has been identified for GPR30, making this study an important contribution to the field. However, the current study provides novel and important circumstantial evidence for the bicarbonate binding site based on mutagenesis and functional assays.

Weaknesses:

Bicarbonate is a challenging ligand for structural and biochemical studies, and because of experimental limitations, this study does not elucidate the exact binding site. Higher resolution structures would be required for structural identification of bicarbonate. The functional assay monitors activation of GPR30, and thus reports on not only bicarbonate binding, but also the integrity of the allosteric network that transduces the binding signal across the membrane. However, biochemical binding assays are challenging because the binding constant is weak, in the mM range.

The authors appropriately acknowledge the limitations of these experimental approaches, and they build a solid circumstantial case for the bicarbonate binding pocket based on extensive mutagenesis and functional analysis. However, the study does fall short of establishing the bicarbonate binding site.

We thank the reviewer for this thoughtful and constructive assessment of our revised manuscript. We are grateful for the recognition of the overall quality of the cryo-EM structure and the proposed mechanism of G-protein coupling, as well as for highlighting the importance of identifying bicarbonate as a physiological ligand for GPR30 and the contribution this work makes to the receptor signaling field. We also appreciate the reviewer’s careful and balanced discussion of the inherent challenges posed by bicarbonate as a low-affinity, small, negatively charged ligand, and we fully agree that, given current experimental limitations, our data provide circumstantial, rather than definitive, evidence for the binding site and that higher-resolution structures would be required for direct visualization. Importantly, we value the reviewer’s acknowledgement that we transparently describe these limitations and that our extensive mutagenesis and functional analyses nonetheless build a solid case for the proposed bicarbonate-binding pocket, which we believe will serve as a useful framework for future biochemical and structural investigation.

Reviewer #1 (Recommendations for the authors):

Overall, the authors do a good job responding to the previous review, with updated structures and experimental data. I have two comments on the current version:

(1) When the authors compare their structure to a previously published structure of the same receptor, they say that the previous structure came out while the current manuscript was in revision (line 255). This is not correct. The previous manuscript was published May 14, 2024, and the current manuscript was received by eLife on May 20, 2024. This sentence should be corrected to "During the preparation of this manuscript..."

We corrected the sentence accordingly (line 259).

(2) Line 173: what other structures are the authors referring to? Citations should be included here.

Is Line 193 correct? We added citations (line 190).

Reviewer #2 (Public review):

Summary:

In this manuscript, "Cryo-EM structure of the bicarbonate receptor GPR30," the authors aimed to enrich our understanding of the role of GPR30 in pH homeostasis by combining structural analysis with a receptor function assay. This work is a natural development and extension of their previous work on Nature Communications (PMID: 38413581). In the current body of work, they solved the cryo-EM structure of the human GPR30-G-protein (mini-Gsqi) complex in the presence of bicarbonate ions at 3.15 Å resolution. From the atomic model built based on this map, they observed the overall canonical architecture of class A GPCR and also identified 3 extracellular pockets created by ECLs (Pockets A-C). Based on the polarity, location, size, and charge of each pocket, the authors hypothesized that pocket A is a good candidate for the bicarbonate binding site. To identify the bicarbonate binding site, the authors performed an exhaustive mutant analysis of the hydrophilic residues in Pocket A and analyzed receptor reactivity via calcium assay. In addition, the human GPR30-G-protein complex model also enabled the authors to elucidate the G-protein coupling mechanism of this special class A GPCR, which plays a crucial role in pH homeostasis.

Strengths:

As a continuation of their recent Nature Communications publication, the authors used cryo-EM coupled with mutagenesis and functional studies to elucidate bicarbonate-GPR30 interaction. This work provided atomic-resolution structural observations for the receptor in complex with G-protein, allowing us to explore its mechanism of action, and will further facilitate drug development targeting GPR30. There were 3 extracellular pockets created by ECLs (Pockets A-C). The authors were able to filter out 2 of them and hypothesized that pocket A was a good candidate for the bicarbonate binding site based on the polarity, location, and charge of each pocket. From there, the authors identified the key residues on GPR30 for its interaction with the substrate, bicarbonate. Together with their previous work, they mapped out amino acids that are critical for receptor reactivity.

Weaknesses:

When we see a reduction of a GPCR-mediated downstream signaling, several factors could potentially contribute to this observation: 1) a reduced total expression of this receptor due to the mutation (transcription and translation issue); 2) a reduced surface expression of this receptor due to the mutation (trafficking issue); and 3) a dysfunctional receptor that doesn't signal due to the mutation. In the current revision, based on the gating strategy, the surface expression of the HA-positive WT GPR30-expressing cells is only 10.6% of the total population, while the surface expression levels of the mutants range from 1.89% (P71A) to 64.4% (D111A). Combining this information with the functional readout in Figure 3F and G, as well as their previous work, the authors concluded that mutations at P71, E115, D125, Q138, C207, D210, and H307 would decrease bicarbonate responses. Among those sites, E115, Q138, and H307 were from their previous Nature Comm paper.

Authors claim P71 and C207 make a structural-stability contribution, as their mutations result in a significant reduction in surface expression: P71A (1.89%) and C207A (2.71%). However, compared to 10.6% of the total population in the WT, (P71A is 17.8% of the WT, and C207A is 25.6% of the WT), this doesn't rule out the possibility that the mutated receptor is also dysfunctional: at 10 mM NaHCO3, RFU of WT is ~500, RFU of P71 and C207 are ~0.

The authors also state that "The D125ECL1A mutant has lost its activity but is located on the surface" and only mention that "D125 is unlikely to be a bicarbonate binding site, and the mutational effect could be explained due to the decreased surface expression". Again, compared to 10.6% of the total population in the WT, D125A (3.94%) is at 37.2% of the WT level. At 10 mM NaHCO3, the RFU of the WT is ~500, while the RFU of D125A is ~0. This doesn't rule out the possibility that the mutated receptor is also dysfunctional. It is also not clear why D125A didn't make it to the surface.

Other mutants that the authors didn't discuss much in their text, namely D111A (64.4%, 607.5% of WT surface expression), E121A (50.4%, 475.5% of WT surface expression), R122 (41.0%, 386.8% of WT surface expression), N276A (38.9%, 367.0% of WT surface expression) and E218A (24.6%, 232.1% of WT surface expression), all have RFU similar to WT, although their surface expression is about 2-6 times higher. On the other hand, Q215A (3.18%, 30% of WT surface expression) has RFU similar to WT, with only a third of the receptor on the surface.

Altogether, the wide range of surface expression across the different cell lines, combined with the different receptor function readouts, makes the cell functional data only partially support their structural observations.
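The normalization behind the percentages quoted above can be reproduced with a few lines of arithmetic. The following is a minimal sketch, assuming that each "% of WT" figure is simply the mutant's HA-positive fraction divided by the WT fraction (10.6%); the function and variable names are illustrative and are not taken from the manuscript.

```python
# Surface expression (% HA-positive cells) as quoted in the review text.
WT_SURFACE_PCT = 10.6
mutant_surface_pct = {
    "P71A": 1.89, "C207A": 2.71, "Q215A": 3.18, "D125A": 3.94,
    "E218A": 24.6, "N276A": 38.9, "R122": 41.0, "E121A": 50.4, "D111A": 64.4,
}

def relative_to_wt(pct, wt_pct=WT_SURFACE_PCT):
    """Return surface expression as a percentage of the WT level (assumed normalization)."""
    return 100.0 * pct / wt_pct

for name, pct in mutant_surface_pct.items():
    print(f"{name}: {pct}% of cells -> {relative_to_wt(pct):.1f}% of WT")
```

Expressing every mutant on this common scale makes the reviewer's point easier to see: a similar RFU can correspond to very different amounts of receptor at the surface.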

We sincerely thank the reviewer for their careful reading and thoughtful evaluation of our manuscript on the cryo-EM structure of the bicarbonate receptor GPR30. We greatly appreciate the reviewer’s positive assessment of the overall significance of combining structural determination with extensive mutagenesis and functional assays to advance understanding of bicarbonate–GPR30 interactions and G-protein coupling, as well as their recognition that these atomic-level insights will be valuable for future mechanistic studies and drug-development efforts. We are also grateful for the reviewer’s constructive critique regarding the interpretation of reduced signaling in the context of variable surface expression across mutants, which highlights an important point about disentangling effects of expression/trafficking from intrinsic receptor dysfunction; these comments are highly insightful and will help us strengthen the clarity and rigor of our presentation and conclusions in the revised manuscript.

Reviewer #2 (Recommendations for the authors):

In this revision, the authors have made a significant effort to improve and validate the structural observations, as well as address the comments in the previous submission. They updated the functional assays and evaluated the receptor function by measuring intracellular calcium mobilization, which is a more direct measurement for the downstream signaling of hGPR30-Gq signaling. They also used flow cytometry with an HA-antibody for a more direct measurement of the surface expression of the receptor, replacing their previous assay that normalized to the housekeeping gene Na-K-ATPase.

I appreciate the effort the authors made to address the previous comments made by the reviewers. However, there are still some concerns about the current data.

(1) The authors have addressed my previous comment on untangling the mixture of their previous and new data in the "insights into bicarbonate binding" section. They have made it clear that the importance of E115, Q138, and H307 in the receptor-bicarbonate interaction was shown in their Nature Communications paper.

(2) The authors have addressed my previous comment on adding some content about the physiological concentration of HCO3, or referring more to their previous work about the rationale to select the bicarbonate dose in their functional assay.

(3) The authors have updated Figure 3.

(4) The authors have updated Supplemental Figure 1 to show the full gel with molecular weight markers in the supplemental data to demonstrate the sample purity.

(5) The authors have updated the predicted model using AF3.

(6) The authors added E218A as suggested before.

Some new suggestions for this R1:

(1) The wide range of surface expression across the different cell lines, combined with the different receptor function readouts, makes the cell functional data only partially support their structural observations.

We acknowledge this limitation. The wide range of surface expression among cell lines, together with differences in assay modalities, may introduce variability that complicates direct quantitative comparisons and therefore only partially supports the structural observations. Future work using more standardized expression systems and matched functional readouts will be important to strengthen the structure–function linkage.

(2) Line 101, "ICL1 and ECL1 contain short α helices": no α helix of ICL1 is shown in Figure 2C.

We removed the word “ICL1” (line 98).

(3) For the unsolved region of ECL2, could the author put a dashed line connecting ECL2 with TM4? In the current Figure 2B, it looks like ECL2 connects TM3 and TM5.

According to the suggestion, we corrected Figure 2B.

(4) I appreciate that the authors updated the predicted model with AF3, but they didn't make clear why they compared their cryo-EM structure (bicarbonate-activated G-protein-incorporated GPR30) with the predicted AF3 model (inactive GPR30).

We wish to emphasize the value of experimental structures over predictions alone. The experimental structure captures features that are independent of receptor activation, such as disulfide (SS) bonds.

(5) I appreciate that the authors have addressed my previous comment on adding some content about the physiological concentration of HCO3, but it was still not clear to me why they picked 11 mM in Figure 3G for the bar graph. Also, since a dose-response curve was made in Figure 3F, why not just calculate and report the EC50 of NaHCO3 for each mutant?

Thank you for your comment. We calculated the EC50 of the calcium response and assessed its correlation with the receptors' cell surface expression. We chose 11 mM in Fig. 3G because our previous paper in Nature Communications showed that the EC50 value in the IPs assay was around 11 mM. However, the calcium response was more sensitive and gave a lower value than expected. Therefore, following your advice, we deleted the bar graph of the 11 mM responses, calculated the EC50, and plotted the correlations among cell surface expression, EC50, and maximum responses (Figure 3F-I, Supplementary File 1). Moreover, we revised the explanation of this mutagenesis study (lines 139-154 and 217-230).

(6) In the previous submission and comments, E218 was in close contact with bicarbonate in the previous Figure 4D (the bicarbonate is deleted in the new structure). I thank the authors for making an E218A mutant and performing the functional assay. As mentioned above, E218A (24.6%, 232.1% of WT surface expression) has a similar functional readout as WT. Doesn't this also indicate that E218A is partially broken, so you will need twice as much as WT to have the same downstream signal?

Thank you for your comment. In our revised manuscript, we described the relationship between cell surface expression and EC50 and found that cell surface expression and the response to bicarbonate are not correlated, as you noted in your review comment (Figure 3F-I, Supplementary File 1). Several possibilities could explain this: GPR30 localization to specific spots on the plasma membrane (PM) might limit the response stoichiometry; GPR30 might also act intracellularly to blunt the increased response that would otherwise result from higher GPR30 expression on the PM; the redundant GPR30 on the PM might be broken; or E218A might be less functional and need twice as much receptor as WT. We will examine the cell surface expression of GPR30 and its response to bicarbonate in a future study.

I would suggest that the authors in future studies consider using the Tet-on inducible cell lines, such as HEK293 Flp-In Trex. These cell lines will allow the authors to fine-tune the surface expression of their mutants to the same level with different doses of Tetracycline in their stable cell lines.

We appreciate your advice. We’ll introduce Tet-on inducible cell lines for future research.
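To illustrate the EC50 calculation discussed in point (5), the following is a minimal sketch of one simple way to read an EC50 off a dose-response curve: log-scale interpolation of the half-maximal response. The source does not specify the fitting procedure the authors used, so this is not their method; the dose series, the Hill parameters, and the function names are hypothetical and serve only to generate synthetic data.

```python
import math

def hill(conc, top, ec50, n=1.0):
    """Hill equation: response at concentration `conc` (baseline assumed to be 0)."""
    return top * conc**n / (ec50**n + conc**n)

def ec50_by_interpolation(concs, responses):
    """Estimate EC50 as the concentration giving half of the maximal observed
    response, interpolating linearly on a log10 concentration axis.
    Assumes concs are positive and ascending, and responses rise with dose."""
    half = max(responses) / 2.0
    points = list(zip(concs, responses))
    for (c_lo, r_lo), (c_hi, r_hi) in zip(points, points[1:]):
        if r_lo <= half <= r_hi and r_hi > r_lo:
            frac = (half - r_lo) / (r_hi - r_lo)
            log_c = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_c
    raise ValueError("half-maximal response is not bracketed by the data")

# Hypothetical dose series (mM) and synthetic responses; NOT data from the paper.
concs = [0.1, 0.3, 1, 3, 10, 30, 100]
responses = [hill(c, top=500.0, ec50=3.0) for c in concs]
print(ec50_by_interpolation(concs, responses))
```

Because the half-maximum is taken from the largest observed response rather than a fitted plateau, the estimate falls slightly below the true value when the top dose has not saturated the receptor. A nonlinear Hill fit would avoid this bias and is the more usual choice when enough dose points are available.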

Reviewer #3 (Public review):

Summary

GPR30 responds to bicarbonate and plays a role in regulating cellular pH and ion homeostasis. However, the molecular basis of bicarbonate recognition by GPR30 remains unresolved. This study reports the cryo-EM structure of GPR30 bound to a chimeric mini-Gq in the presence of bicarbonate, revealing mechanistic insights into its G-protein coupling. Nonetheless, the study does not identify the bicarbonate-binding site within GPR30.

Strengths

The work provides strong structural evidence clarifying how GPR30 engages and couples with Gq.

Weaknesses

Several GPR30 mutants exhibited diminished responses to bicarbonate, but their expression levels were also reduced. As a result, the mechanism by which GPR30 recognizes bicarbonate remains uncertain, leaving this aspect of the study incomplete.

We sincerely thank the reviewer for this thoughtful and balanced assessment of our manuscript, including the clear summary of the central advance and the constructive identification of remaining limitations. We particularly appreciate the recognition that our cryo-EM analysis provides strong structural evidence for how GPR30 engages and couples with Gq, and we agree that pinpointing the bicarbonate-binding site remains a critical open question. In the revised manuscript, we will make this point more explicit, clarify the interpretation of the mutagenesis results in light of reduced receptor expression for some variants, and further strengthen the presentation and discussion of what our current data do, and do not, allow us to conclude regarding bicarbonate recognition by GPR30.

Reviewer #3 (Recommendations for the authors):

The authors have removed the bicarbonate assignment from their model and have addressed all of my concerns. In this study, or in future work, it would be advisable for the authors to explore the use of bicarbonate mimetics with higher binding affinity to facilitate more definitive structural characterization.

Thank you for this constructive suggestion. We agree that exploring bicarbonate mimetics with higher binding affinity would be an important next step to enable more definitive structural characterization of GPR30 and to strengthen mechanistic conclusions. In future work, we plan to pursue the identification and/or design of such mimetics, guided by the architecture and mutational landscape of the extracellular pocket described here, and to combine these ligands with optimized cryo-EM sample preparation and complementary functional assays to better stabilize and visualize the bound state.


eLife Assessment

This study presents valuable findings on the physiological and computational underpinnings of the accumulation of intermittent glimpses of sensory evidence. While the authors present solid evidence to support their claims, a more exhaustive characterisation of how the different signals interact could further strengthen their case. The work will be of interest to cognitive and systems neuroscientists working on decision-making.


Reviewer #1 (Public review):

Summary:

This paper aims to characterise the physiological and computational underpinnings of the accumulation of intermittent glimpses of sensory evidence.

Strengths:

(1) Elegant combination of electroencephalography and computational modelling.

(2) The authors describe results of two separate experiments, with very similar results, in effect providing an internal replication.

(3) Innovative task design, including different gap durations.

Weaknesses:

(1) The authors introduce the CPP as tracking an intermediary (motor-independent) evidence integration process, and the MBL as motor preparation that maintains a sustained representation of the decision variable. It would help if the authors could more directly and quantitatively assess whether their current data are in line with this. That is, do these signals exhibit key features of evidence accumulation (slope proportional to evidence strength, terminating at a common amplitude that reflects the bound)? Additionally, plotting these signals report-locked (i.e., locked to the button press) would help here. What do the results mean for the narrative of this paper?

(2) The novelty of this work lies partly in the aim to characterize how the CPP and MBL interact (page 5, lines 3-5). However, this analysis seems to be missing. For example, at the single-trial level, do relatively strong CPP pulses predict faster/larger MBL? The simulations in Figure 5 are interesting, but more could be done with the measured physiology.

(3) The focus on CPP and MBL is hypothesis-driven but also narrow. Since we know only a little about the physiology during this "gaps" task, have the authors considered computing TFRs from different sensor groupings (perhaps in a supplementary figure)?